WebRTC

Simulating video stream transmission with WebRTC: a video element sends its stream through local peers

Last updated: 2021-12-16

2021-12-16: updated the references

2021-12-15: document created

video -> video

On a web page, one video element passes its video to another video element.

html

The source video element specifies the video file with a source child element.

```html
<video id="fromVideo" playsinline controls loop muted>
  <source src="../../src/video/s1.mp4" type="video/mp4">
  <p>Your browser does not support video playback.</p>
</video>

<video id="toVideo" playsinline autoplay></video>
```

js

We need to listen for the canplay event.

```javascript
'use strict';

const srcVideo = document.getElementById('fromVideo');
const toVideo = document.getElementById('toVideo');

srcVideo.addEventListener('canplay', () => {
  let stream;
  // HTMLMediaElement.captureStream() takes no arguments (unlike
  // HTMLCanvasElement.captureStream(frameRate)), so no fps value is passed.
  if (srcVideo.captureStream) {
    stream = srcVideo.captureStream();
  } else if (srcVideo.mozCaptureStream) {
    stream = srcVideo.mozCaptureStream(); // Firefox's prefixed variant
  } else {
    console.error('rustfisher.com: captureStream() is not supported!');
    stream = null;
  }
  toVideo.srcObject = stream; // show the captured stream in the second video
});
```

The captureStream() method returns a MediaStream object that carries a real-time capture of the element's content.

Reference: HTMLMediaElement.captureStream()

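If the HTML file is opened directly from the local file system, calling captureStream() throws an error. This is most likely because the browser treats a video loaded via file:// as cross-origin data; serving the page from a local web server avoids it.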
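Both examples repeat the same feature detection, and the file:// problem shows up as an error thrown by captureStream(). The sketch below folds both concerns into one helper under a hypothetical name, captureFrom (it is not part of the original code): it prefers the standard method, falls back to Firefox's mozCaptureStream(), and returns null instead of throwing.

```javascript
// Hypothetical helper, not part of the original article: capture a MediaStream
// from a <video> (or <audio>) element, or return null if that is not possible.
function captureFrom(mediaEl) {
  try {
    if (typeof mediaEl.captureStream === 'function') {
      return mediaEl.captureStream();      // standard method, takes no arguments
    }
    if (typeof mediaEl.mozCaptureStream === 'function') {
      return mediaEl.mozCaptureStream();   // Firefox's prefixed variant
    }
    console.error('captureStream() is not supported');
  } catch (err) {
    // For example, the capture may be refused when the page was opened via file://.
    console.error(`captureStream() failed: ${err.name}: ${err.message}`);
  }
  return null;
}
```

With such a helper, the body of the canplay handler in the first example would shrink to `toVideo.srcObject = captureFrom(srcVideo);`.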

Demo link

Simulating peer connections

The previous example passed the stream directly from one video element to another. Now let's simulate sending the video stream through RTCPeerConnection.

Preparation

Place two video elements on the page: fromVideo is the source, and toVideo shows the received video.

```html
<div id="container">
  <video id="fromVideo" playsinline controls muted>
    <source src="../../src/video/v5_1.mp4" type="video/mp4">
    <p>Your browser does not support video playback.</p>
  </video>
  <video id="toVideo" playsinline autoplay controls></video>
  <p>Depending on your network speed, the video may take a while to load.</p>
</div>

<script src="../../src/js/adapter-2021.js"></script>
<script src="js/pc.js" async></script>
```

With a good network connection, you can also load adapter from webrtc.github.io:

```html
<script src="https://webrtc.github.io/adapter/adapter-latest.js"></script>
```

In the JS, get the two video elements:

```javascript
'use strict';

const srcVideo = document.getElementById('fromVideo');
const toVideo = document.getElementById('toVideo');
```

Getting the video stream

The data stream travels from one peer to the other. First, define some variables:

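```javascript
let stream; // the source stream
let pc1;    // the sending peer
let pc2;    // the receiving peer

// Legacy RTCOfferOptions: ask for audio and video in the offer
const offerOptions = {
  offerToReceiveAudio: true,
  offerToReceiveVideo: true
};

let startTime; // when call() started, used to time the first resize of toVideo
```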

Grab the video stream from srcVideo:

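```javascript
function tryGetStream() {
  if (stream) {
    console.log('Already have a stream, skipping');
    return;
  }
  if (srcVideo.captureStream) {
    stream = srcVideo.captureStream();
    console.log('Got the stream with captureStream()', stream);
    call();
  } else if (srcVideo.mozCaptureStream) {
    stream = srcVideo.mozCaptureStream();
    console.log('Got the stream with mozCaptureStream()', stream);
    call();
  } else {
    console.log('captureStream() is not supported');
  }
}

srcVideo.oncanplay = tryGetStream; // fires once the video has enough data to start playing

// readyState >= 3 (HAVE_FUTURE_DATA): canplay may already have fired before this script ran
if (srcVideo.readyState >= 3) {
  console.log('The video can already play; grabbing the stream directly');
  tryGetStream();
}

// Start playback; play() returns a promise that rejects if playback is blocked
srcVideo.play().catch(err => console.log('play() failed:', err));
```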

The captureStream() method obtains the video stream from srcVideo.

The call() function is defined further below.
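The oncanplay assignment plus the readyState >= 3 check handle two cases: pc.js is loaded with async, so the video may already be playable when the script runs (canplay has already fired), or it may become playable later; the `if (stream)` guard in tryGetStream() keeps the stream from being captured twice. A hypothetical helper, whenCanPlay, expresses the same "run once, now or later" intent directly. The value 3 is HTMLMediaElement.HAVE_FUTURE_DATA, hard-coded here as an assumption-free constant so the sketch also runs outside a browser.

```javascript
// Value of HTMLMediaElement.HAVE_FUTURE_DATA, hard-coded so this sketch runs without a browser.
const HAVE_FUTURE_DATA = 3;

// Hypothetical helper: call `callback` exactly once, as soon as `video` can play.
function whenCanPlay(video, callback) {
  if (video.readyState >= HAVE_FUTURE_DATA) {
    callback(); // already playable: canplay may have fired before we listened
    return;
  }
  // Otherwise wait for the first canplay event; { once: true } removes the listener after it fires.
  video.addEventListener('canplay', () => callback(), { once: true });
}
```

In pc.js this would be used as `whenCanPlay(srcVideo, tryGetStream);`, replacing the oncanplay assignment and the readyState check.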

Listen for some events on toVideo:

```javascript
toVideo.onloadedmetadata = () => {
  console.log(`toVideo onloadedmetadata size: ${toVideo.videoWidth}x${toVideo.videoHeight}`);
};

toVideo.onresize = () => {
  console.log(`toVideo onresize: ${toVideo.videoWidth}x${toVideo.videoHeight}`);
  if (startTime) {
    const elapsedTime = window.performance.now() - startTime;
    console.log('Time until resize: ' + elapsedTime.toFixed(3) + 'ms');
    startTime = null; // consume the start mark so only the first resize is timed
  }
};
```
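startTime is set when call() starts and consumed by the first resize of toVideo, so the logged value roughly measures how long it takes from starting the call until the remote video reports its dimensions. The same "measure once, then reset" logic can be isolated into a small one-shot timer. createOneShotTimer is a hypothetical name, and the clock is injectable so the sketch can be tested without a browser.

```javascript
// Hypothetical helper: a one-shot stopwatch. start() records a timestamp;
// the first stop() returns the elapsed milliseconds and clears the mark,
// and any further stop() calls return null until start() is called again.
function createOneShotTimer(now = () => performance.now()) {
  let startTime = null;
  return {
    start() {
      startTime = now();
    },
    stop() {
      if (startTime === null) return null;
      const elapsed = now() - startTime;
      startTime = null; // consume the mark, like `startTime = null` in onresize
      return elapsed;
    },
  };
}
```

In pc.js, call() would call `timer.start()` and the onresize handler would log the result of `timer.stop()` when it is not null.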

Transmission

Create two RTCPeerConnection objects and start the transmission.

```javascript
function call() {
  console.log('call started...');
  startTime = window.performance.now();
  const videoTracks = stream.getVideoTracks();
  const audioTracks = stream.getAudioTracks();
  if (videoTracks.length > 0) {
    console.log(`Using video track: ${videoTracks[0].label}`);
  }
  if (audioTracks.length > 0) {
    console.log(`Using audio track: ${audioTracks[0].label}`);
  }
  const servers = null; // no STUN/TURN servers: both peers live in the same page
  pc1 = new RTCPeerConnection(servers);
  console.log('Created local peer pc1');
  pc1.onicecandidate = e => onIceCandidate(pc1, e);
  pc2 = new RTCPeerConnection(servers);
  console.log('Created remote peer pc2');
  pc2.onicecandidate = e => onIceCandidate(pc2, e);
  pc1.oniceconnectionstatechange = e => onIceStateChange(pc1, e);
  pc2.oniceconnectionstatechange = e => onIceStateChange(pc2, e);
  pc2.ontrack = gotRemoteStream;

  // Send every track of the captured stream (video, plus audio if present) through pc1
  stream.getTracks().forEach(track => pc1.addTrack(track, stream));
  console.log('Added the stream to pc1');

  console.log('pc1 createOffer start');
  pc1.createOffer(offerOptions).then(onCreateOfferSuccess, onCreateSessionDescriptionError);
}

function onCreateSessionDescriptionError(error) {
  console.log(`Failed to create session description: ${error.toString()}`);
}

function onCreateOfferSuccess(desc) {
  console.log(`Offer from pc1\n${desc.sdp}`);
  console.log('pc1 setLocalDescription start');
  pc1.setLocalDescription(desc).then(() => onSetLocalSuccess(pc1), onSetSessionDescriptionError);
  console.log('pc2 setRemoteDescription start');
  pc2.setRemoteDescription(desc).then(() => onSetRemoteSuccess(pc2), onSetSessionDescriptionError);
  console.log('pc2 createAnswer start');
  // Each RTCPeerConnection runs these operations in order, so createAnswer()
  // only executes after pc2's setRemoteDescription() has completed.
  pc2.createAnswer().then(onCreateAnswerSuccess, onCreateSessionDescriptionError);
}

function onSetLocalSuccess(pc) {
  console.log(`${getName(pc)} setLocalDescription complete`);
}

function onSetRemoteSuccess(pc) {
  console.log(`${getName(pc)} setRemoteDescription complete`);
}

function onSetSessionDescriptionError(error) {
  console.log(`Failed to set session description: ${error.toString()}`);
}

function gotRemoteStream(event) {
  // ontrack fires once per track; the tracks share one stream, so attach it only once
  if (toVideo.srcObject !== event.streams[0]) {
    toVideo.srcObject = event.streams[0];
    console.log('pc2 received the remote stream', event);
  }
}

function onCreateAnswerSuccess(desc) {
  console.log(`Answer from pc2\n${desc.sdp}`);
  console.log('pc2 setLocalDescription start');
  pc2.setLocalDescription(desc).then(() => onSetLocalSuccess(pc2), onSetSessionDescriptionError);
  console.log('pc1 setRemoteDescription start');
  pc1.setRemoteDescription(desc).then(() => onSetRemoteSuccess(pc1), onSetSessionDescriptionError);
}

function onIceCandidate(pc, event) {
  // Both peers are in this page, so "signaling" is a direct call to the other peer.
  // event.candidate is null when gathering has finished; it is forwarded as-is.
  getOtherPc(pc).addIceCandidate(event.candidate)
    .then(
      () => onAddIceCandidateSuccess(pc),
      err => onAddIceCandidateError(pc, err)
    );
  console.log(`${getName(pc)} ICE candidate: ${event.candidate ? event.candidate.candidate : '(null)'}`);
}

function onAddIceCandidateSuccess(pc) {
  console.log(`${getName(pc)} addIceCandidate success`);
}

function onAddIceCandidateError(pc, error) {
  console.log(`${getName(pc)} failed to add ICE candidate: ${error.toString()}`);
}

function onIceStateChange(pc, event) {
  if (pc) {
    console.log(`${getName(pc)} ICE state: ${pc.iceConnectionState}`);
    console.log('ICE state change event: ', event);
  }
}

function getName(pc) {
  return (pc === pc1) ? 'pc1' : 'pc2';
}

function getOtherPc(pc) {
  return (pc === pc1) ? pc2 : pc1;
}
```

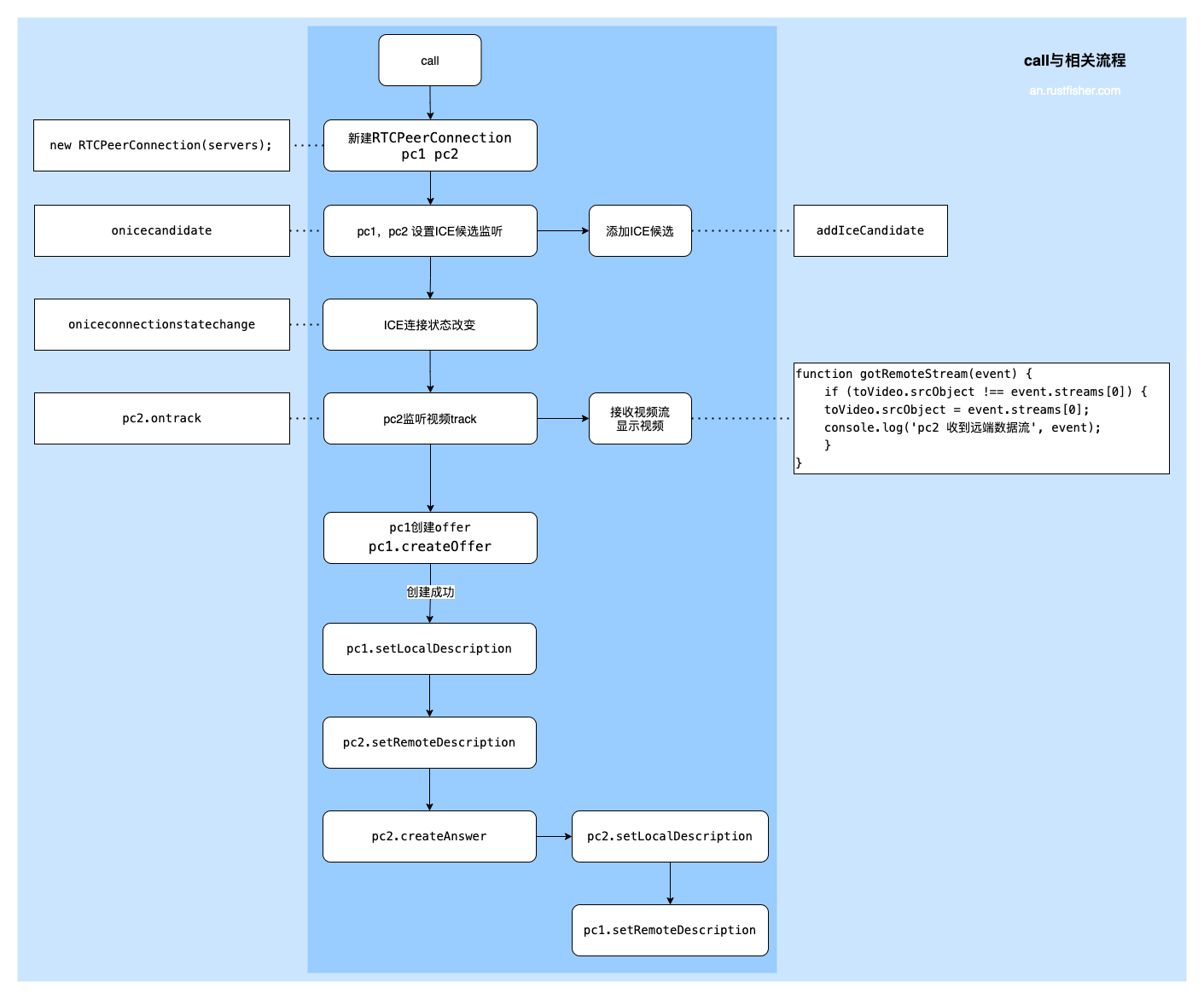

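call() starts the simulated transmission. After creating the two peers, it sets up the ICE-related listeners: one for new ICE candidates and one for ICE connection-state changes.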
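Because both peers live in the same page, ICE "signaling" is just a function call: every candidate one peer gathers is handed straight to the other peer's addIceCandidate(). Below is a sketch of that wiring as a hypothetical helper, linkIce. It only assumes the standard onicecandidate handler and the promise-returning addIceCandidate(), so stand-in objects can exercise it outside a browser.

```javascript
// Hypothetical helper: forward ICE candidates in both directions between
// two peers that live in the same page. `onError` is optional.
function linkIce(pcA, pcB, onError = err => console.error('addIceCandidate failed:', err)) {
  // Build a handler that hands each gathered candidate to the peer `to`.
  const forward = (to) => (event) => {
    // A null candidate (end of gathering) is forwarded as-is, as in the original pc.js.
    to.addIceCandidate(event.candidate).catch(onError);
  };
  pcA.onicecandidate = forward(pcB);
  pcB.onicecandidate = forward(pcA);
}
```

In call(), `linkIce(pc1, pc2)` could take the place of the two onicecandidate assignments, at the cost of the per-candidate logging done by onIceCandidate().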

pc1 creates an offer and pc2 replies with an answer. As soon as pc2 receives the video stream, it is shown directly in toVideo.
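The offer/answer exchange just described can also be written as a single async function. The sketch below uses a hypothetical name, negotiate, and relies only on the promise-returning createOffer(), createAnswer(), setLocalDescription() and setRemoteDescription() methods of RTCPeerConnection; awaiting each call makes the order of the six steps explicit.

```javascript
// Hypothetical helper: run the offer/answer exchange between two peers in the same page.
// `caller` plays the role of pc1 and `callee` the role of pc2.
async function negotiate(caller, callee, offerOptions = {}) {
  const offer = await caller.createOffer(offerOptions); // 1. pc1 creates the offer
  await caller.setLocalDescription(offer);              // 2. pc1 applies it locally
  await callee.setRemoteDescription(offer);             // 3. pc2 receives the offer
  const answer = await callee.createAnswer();           // 4. pc2 creates the answer
  await callee.setLocalDescription(answer);             // 5. pc2 applies it locally
  await caller.setRemoteDescription(answer);            // 6. pc1 receives the answer
  return { offer, answer };
}
```

In call(), it could replace the createOffer step, for example `negotiate(pc1, pc2, offerOptions).catch(err => console.log('Negotiation failed:', err));`. This version stops at the first step that fails, so errors are handled in one place.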

Diagram of call() and the related flow

Result

See the demo at https://www.an.rustfisher.com/webrtc/capture/video-local-peer/pc.html

Summary

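Neither example in this article uses a real transport service, so the video stream transmission is only simulated. They are a way to get familiar with the video element and captureStream(), and with the flow of creating peers and setting up ICE.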


This article is also published on:

Huawei Cloud Community

Zhihu
